Advances In Video Compression System Using Deep Neural Network: A Review And Case Studies

Dandan Ding, Zhan Ma, Di Chen, Qingshuang Chen, Zoe Liu and Fengqing Zhu

Abstract

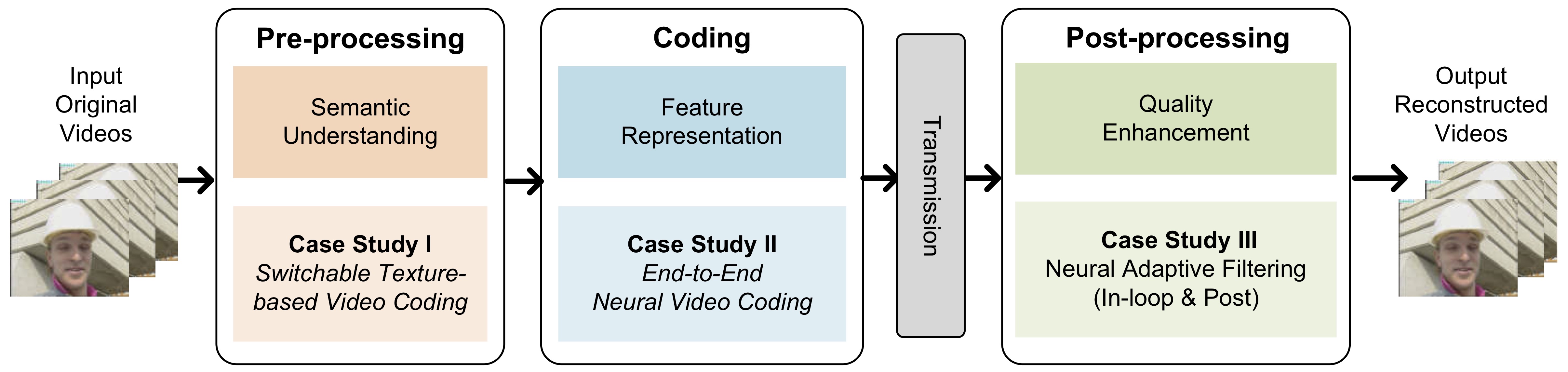

Significant advances in video compression systems have been made over the past several decades to keep pace with the nearly exponential growth of Internet-scale video traffic. From the application perspective, we identify three major functional blocks, namely pre-processing, coding, and post-processing, which have been continuously investigated to maximize the end-user quality of experience (QoE) under a limited bit rate budget. Recently, artificial intelligence (AI) powered techniques have shown great potential to further increase the efficiency of these functional blocks, both individually and jointly. In this article, we extensively review recent technical advances in video compression systems, with an emphasis on deep neural network (DNN)-based approaches, and then present three comprehensive case studies. On pre-processing, we show a switchable texture-based video coding example that leverages DNN-based scene understanding to extract semantic areas and thereby improve the subsequent video coder. On coding, we present an end-to-end neural video coding framework that uses stacked DNNs to code raw input videos efficiently and compactly through fully data-driven learning. On post-processing, we demonstrate two neural adaptive filters that facilitate in-loop filtering and post-filtering, respectively, to enhance compressed frames. Finally, a companion website hosting the content developed in this work is publicly accessible at https://purdueviper.github.io/dnn-coding/.

Downloads

- Paper

- Supplementary

- Source Code

Visual Comparisons

Sample reconstructed videos from Section V, Table II. The AV1 baseline results are prefixed with 'av1_', and the switchable texture mode results are prefixed with 'switch_'.

Contact

Please address all correspondence to:

- E-mail: zhu0@purdue.edu

Acknowledgment

This work was sponsored by a Google Faculty Research Award and the Google Chrome University Research Program. The webpage template was inspired by this project page.